Tag: google

An open letter to tell Google to commit to not weaponizing its technology (May 17, 2018)

Following an invitation by Prof. Lilly Irani (UCSD), I was among the first signatories of this ICRAC “Open Letter in…

![[#ecnEHESS seminar] Nikos Smyrnaios, “GAFAM: our daily oligopoly” (March 20, 2017, 5 pm)](https://www.casilli.fr/wp-content/uploads/2017/03/dislike.png)

[#ecnEHESS seminar] Nikos Smyrnaios, “GAFAM: our daily oligopoly” (March 20, 2017, 5 pm)

This course is open to auditors. To register, please fill out the form. As part of our EHESS seminar Etudier…

On France Inter (August 3, 2016)

» Listen to “Le téléphone sonne – Notre rapport au virtuel” (41 min.) Pokemon Go, social networks… What relationship do we have with…

[Radio] What is Digital Labor? (RFI, Oct. 16, 2015)

Radio RFI International interviewed me about the latest book I co-authored, Qu’est-ce que le digital labor ? (INA, 2015) –…

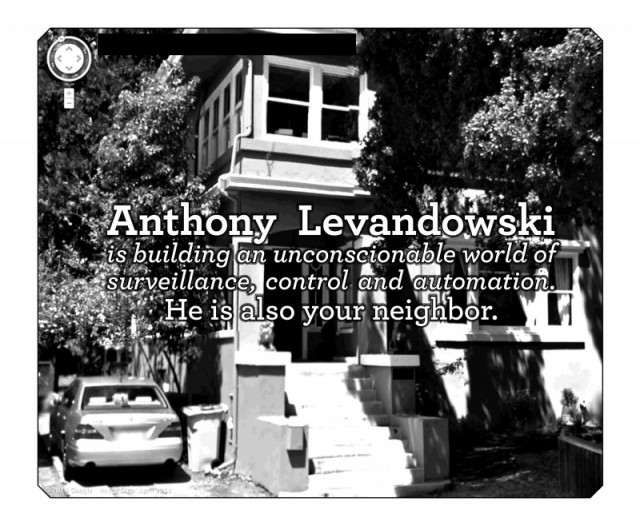

Privacy is not dying, it is being killed. And those who are killing it have names and addresses

Quite often, while discussing the role of web giants in enforcing mass digital surveillance (and while insisting that there is…

Google censors swear words: a non-story [Updated 02.12.13]

For the past few days this story has been making the rounds of the Internets. The content of Google Hangouts under automated surveillance? Mystery…

Google and its 'pirate data center': toward a tax-optimized extraterritoriality? [Updated 01.11.2013]

According to the latest news, Google is reportedly building a floating datacenter! CNET published an article, widely picked up…

What is Digital Labor? [Audio + slides + bibliography]

UPDATE: Qu’est-ce que le digital labor ? is now a book, published by Editions de l’INA in 2015. In this…

The BnF, Guy Debord and the schizophrenic spectacle of copyright

[Updated April 1, 2013, 10:29 am. This post was republished on the Huffingtonpost and translated into English…

EHESS seminar by Dominique Cardon: “Anthropology of Google’s algorithm” (May 16, 2012, 5 pm)

[UPDATE 20.05.2012: a very detailed report, offering a discussion of the topics covered in this seminar, is now available…

![[Radio] What is Digital Labor? (RFI, Oct. 16, 2015)](https://www.casilli.fr/wp-content/uploads/2015/10/logo-rfi-haute-def.jpg)

![Google censors swear words: a non-story [Updated 02.12.13]](https://www.casilli.fr/wp-content/uploads/2013/11/swearing.jpg)

![Google and its 'pirate data center': toward a tax-optimized extraterritoriality? [Updated 01.11.2013]](https://www.casilli.fr/wp-content/uploads/2013/10/googlenautilus.jpg)