I’m rushing to finish up a number of things before flying to Spain for a conference. So Facebook chose the worst possible moment to do what Facebook does best – manipulate data and algorithms without giving a damn about the well-being of a billion users. Except this time it was not done for profit, but for science. Controversy ensued nonetheless.

Facebook’s unethical experiment: It made news feeds happier or sadder to manipulate people’s emotions.

Unfortunately, I don’t have time to write a 5,000-word essay about this. Moreover, as an academic I’m fed up with being an accessory to Big Platform storytelling. So, I point you to a few nice articles written by colleagues. They all make really valid points, and you should probably read them if you want to overcome the rhetoric conveyed by the press and the TV, which boils down to saying “you should be outraged because during the last 10 years you’ve been more interested in crap stories about Web celebrities, adorable dogs, and violent teenagers that we’ve been feeding you in our technology columns, and you never took the time to realize that the very mission of a medium like Facebook is to manipulate people’s feelings, opinions, and moods”.

So, without further ado, here are the recommended readings. You can use them to outsmart your geeky friends during your summer outings.

Excerpt: “(…) it’s not clear what the notion that Facebook users’ experience is being “manipulated” really even means, because the Facebook news feed is, and has always been, a completely contrived environment. I hope that people who are concerned about Facebook “manipulating” user experience in support of research realize that Facebook is constantly manipulating its users’ experience. In fact, by definition, every single change Facebook makes to the site alters the user experience, since there simply isn’t any experience to be had on Facebook that isn’t entirely constructed by Facebook. When you log onto Facebook, you’re not seeing a comprehensive list of everything your friends are doing, nor are you seeing a completely random subset of events. In the former case, you would be overwhelmed with information, and in the latter case, you’d get bored of Facebook very quickly. Instead, what you’re presented with is a carefully curated experience that is, from the outset, crafted in such a way as to create a more engaging experience (read: keeps you spending more time on the site, and coming back more often). The items you get to see are determined by a complex and ever-changing algorithm that you make only a partial contribution to (by indicating what you like, what you want hidden, etc.). It has always been this way, and it’s not clear that it could be any other way. So I don’t really understand what people mean when they sarcastically suggest–as Katy Waldman does in her Slate piece–that “Facebook reserves the right to seriously bum you out by cutting all that is positive and beautiful from your news feed”. Where does Waldman think all that positive and beautiful stuff comes from in the first place? Does she think it spontaneously grows wild in her news feed, free from the meddling and unnatural influence of Facebook engineers?”

Excerpt: “I identify this model of control as a Gramscian model of social control: one in which we are effectively micro-nudged into “desired behavior” as a means of societal control. Seduction, rather than fear and coercion are the currency, and as such, they are a lot more effective. (Yes, short of deep totalitarianism, legitimacy, consent and acquiescence are stronger models of control than fear and torture—there are things you cannot do well in a society defined by fear, and running a nicely-oiled capitalist market economy is one of them).

The secret truth of power of broadcast is that while very effective in restricting limits of acceptable public speech, it was never that good at motivating people individually. Political and ad campaigns suffered from having to live with “broad profiles” which never really fit anyone. What’s a soccer mom but a general category that hides great variation? With new mix of big data and powerful, oligopolistic platforms (like Facebook) all that is solved, to some degree. Today, more and more, not only can corporations target you directly, they can model you directly and stealthily. They can figure out answers to questions they have never posed to you, and answers that you do not have any idea they have. Modeling means having answers without making it known you are asking, or having the target know that you know. This is a great information asymmetry, and combined with the behavioral applied science used increasingly by industry, political campaigns and corporations, and the ability to easily conduct random experiments (the A/B test of the said Facebook paper), it is clear that the powerful have increasingly more ways to engineer the public, and this is true for Facebook, this is true for presidential campaigns, this is true for other large actors: big corporations and governments.”

Excerpt: “(…) on the substance of the research, there are still serious questions about the validity of methodological tools used, the interpretation of results, and use of inappropriate constructs. Prestigious and competitive peer-reviewed journals like PNAS are not immune from publishing studies with half-baked analyses. Pre-publication peer review (as this study went through) is important for serving as a check against faulty or improper claims, but post-publication peer review of scrutiny from the scientific community—and ideally replication—is an essential part of scientific research. Publishing in PNAS implies the authors were seeking both a wider audience and a heightened level of scrutiny than publishing this paper in a less prominent outlet. To be clear: this study is not without its flaws, but these debates, in of themselves, should not be taken as evidence that the study is irreconcilably flawed. If the bar for publication is anticipating every potential objection or addressing every methodological limitation, there would be precious little scholarship for us to discuss. Debates about the constructs, methods, results, and interpretations of a study are crucial for synthesizing research across disciplines and increasing the quality of subsequent research.

Third, I want to move to the issue of epistemology and framing. There is a profound disconnect in how we talk about the ways of knowing how systems like Facebook work and the ways of knowing how people behave. As users, we expect these systems to be responsive, efficient, and useful and so companies employ thousands of engineers, product managers, and usability experts to create seamless experiences. These user experiences require diverse and iterative methods, which include A/B testing to compare users’ preferences for one design over another based on how they behave. These tests are pervasive, active, and on-going across every conceivable online and offline environment from couponing to product recommendations. Creating experiences that are “pleasing”, “intuitive”, “exciting”, “overwhelming”, or “surprising” reflects the fundamentally psychological nature of this work: every A/B test is a psych experiment.”

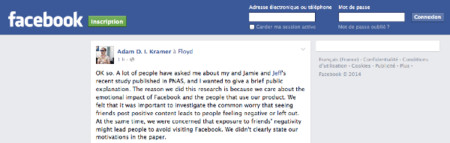

[UPDATE 29.06.2014] Just when folks in the academic community were finally coming back to their senses, the first author of the Facebook study issued an “apology” that will go down in the annals of douchebaggerdom.

“The reason we did this research is because we care about the emotional impact of Facebook and the people that use our product. We felt that it was important to investigate the common worry that seeing friends post positive content leads to people feeling negative or left out. At the same time, we were concerned that exposure to friends’ negativity might lead people to avoid visiting Facebook. We didn’t clearly state our motivations in the paper. (…) And we found the exact opposite to what was then the conventional wisdom: Seeing a certain kind of emotion (positive) encourages it rather than suppresses is.”

[Shakespearean aside: The key sentence here is “we were concerned that exposure to friends’ negativity might lead people to avoid visiting Facebook”: so this study demonstrates that if Facebook just suppresses every hint of negativity and force-feeds users with sugar-coated unicorns, people won’t develop some kind of “diabetes of the soul”.]

“Nobody’s posts were “hidden,” they just didn’t show up on some loads of Feed.”

[Shakespearean aside: Why, that reassur… wait a minute! Isn’t “not showing” synonymous with “hiding”?]

“Having written and designed this experiment myself, I can tell you that our goal was never to upset anyone. I can understand why some people have concerns about it, and my coauthors and I are very sorry for the way the paper described the research and any anxiety it caused.”

[Shakespearean aside: Wouldn’t want to overinterpret that, but he seems to say he’s sorry for the anxieties caused by the publication of the paper, not for the anxieties allegedly caused by the experiment itself…]

![In defense of Facebook | [citation needed] http://www.talyarkoni.org/blog/2014/06/28/in-defense-of-facebook/](http://kwout.com/cutout/u/z2/77/gyj.jpg)